How to Debug GPT-4 Responses: A Practical Guide

As large language models (LLMs) like GPT-4 become integral to applications ranging from customer support to research and code generation, developers often face an important challenge: debugging responses that are not what they expected. Unlike traditional software, GPT-4 doesn’t throw runtime errors when it goes wrong. Instead, it may produce irrelevant output, hallucinated facts, or answers that misread the instructions. Debugging therefore requires a structured, analytical approach.

This guide walks through essential strategies for diagnosing and fixing issues when GPT-4 does not respond as you expect.

🔍 1. Understand the Root Cause

Before trying to fix an undesirable response, pinpoint why it happened. Most GPT-4 failures fall into a few predictable categories:

| Issue type | Symptoms |
| --- | --- |
| Prompt ambiguity | Vague or off-topic answers |
| Context overflow | GPT-4 “forgets” earlier information |
| Hallucination | Invented facts or confident false claims |
| Misaligned format | Output missing required structure |
| Missing constraints | GPT-4 becomes too creative or general |

Knowing the cause helps you pick the correct debugging strategy.

🧠 2. Examine the Prompt Step-by-Step

A surprising number of failures originate in the structure of the prompt. To debug it:

Remove unnecessary instructions

Isolate each request into separate sentences or bullet points

Check whether your requirements contradict one another

Re-order the prompt to place the most important instructions first

Example fix:

❌ “Write a write-up quickly but also include citations and a full technical glossary while keeping it under 500 characters.”

✔️ “Write a compressed article (max 500 characters). Include one citation. Include a short glossary.”

Good prompts reduce the chance of GPT-4 hallucinating or misinterpreting instructions.
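The checklist above can be sketched as a small prompt builder. This is only an illustration: `build_prompt` is a hypothetical helper, not part of any library, and it assumes you keep the main task and each constraint as separate strings so that contradictions are easier to spot.

```python
def build_prompt(task: str, constraints: list[str]) -> str:
    """Put the main task first, then list one constraint per line."""
    lines = [task.strip(), "", "Constraints:"]
    # Skip empty entries so the prompt carries no stray bullets.
    lines += [f"- {c.strip()}" for c in constraints if c.strip()]
    return "\n".join(lines)


prompt = build_prompt(
    "Write a compressed article.",
    ["Maximum 500 characters.", "Include one citation.", "Include a short glossary."],
)
print(prompt)
```

Keeping constraints in a list also makes it easy to remove or reorder one instruction at a time while you look for the one that causes the failure.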

📌 3. Use Explicit Output Formatting

When GPT-4 produces inconsistent or messy responses, force structure through formatting instructions.

Examples:

“Respond using markdown headings.”

“Output only JSON, without commentary.”

“Give a table followed by a summary paragraph.”

Providing a template is even better:

    {
      "title": "...",
      "summary": "...",
      "steps": [
        "step1",
        "step2"
      ]
    }

Clear structures reduce guesswork and increase reliability.
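Once you ask for a fixed structure, you can check for it automatically. The sketch below assumes the response should be a JSON object with the `title`, `summary`, and `steps` keys from the template above; `validate_response` and `REQUIRED_KEYS` are names made up for this example.

```python
import json

# Expected keys and their Python types, taken from the template above.
REQUIRED_KEYS = {"title": str, "summary": str, "steps": list}


def validate_response(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the output fits the template."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not a JSON object"]
    problems = []
    for key, expected_type in REQUIRED_KEYS.items():
        if key not in data:
            problems.append(f"missing key: {key}")
        elif not isinstance(data[key], expected_type):
            problems.append(f"wrong type for key: {key}")
    return problems
```

A non-empty result tells you which part of the format was ignored, which is usually more useful than simply noticing that the output "looks wrong".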

🔁 4. Apply Iterative Refinement

Don’t try to fix everything at once. Debug progressively:

Ask GPT-4 to evaluate its own response

→ “Did you miss any instructions from my prompt?”

Ask what information it needs

→ “What clarifications would allow you to generate a better answer?”

Request a revised version

→ “Rewrite the response following the original constraints.”

GPT-4 is often surprisingly good at correcting itself when guided.
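The refinement steps above can be sketched as a loop. This is a minimal sketch under stated assumptions: `ask_model` is a placeholder for whatever function sends a prompt to your model and returns text, and `is_acceptable` is a check you write yourself. Neither is a real library call.

```python
from typing import Callable


def refine(
    ask_model: Callable[[str], str],
    prompt: str,
    is_acceptable: Callable[[str], bool],
    max_rounds: int = 3,
) -> str:
    """Ask once, then request rewrites until the check passes or rounds run out."""
    response = ask_model(prompt)
    for _ in range(max_rounds):
        if is_acceptable(response):
            break
        # Restate the original prompt so the constraints stay in view.
        follow_up = (
            f"Original prompt:\n{prompt}\n\n"
            f"Your previous response:\n{response}\n\n"
            "Rewrite the response following the original constraints."
        )
        response = ask_model(follow_up)
    return response
```

Capping the number of rounds keeps a stubborn failure from turning into an endless and expensive loop. If the check still fails after the last round, the prompt itself probably needs attention.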

📏 5. Manage Context Length

If you’re using long conversations or large documents, GPT-4 may drop early instructions because of context limits.

Tips:

Use summaries instead of the full history

Restate key constraints frequently

Pass essential data as structured input rather than narrative text

Debugging context issues is essential for production apps.
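One way to apply these tips is to rebuild the context on every request: constraints first, then a summary, then only the newest turns that fit. The sketch below is an assumption-laden illustration. `build_context` is a hypothetical helper, and it counts characters as a crude stand-in for tokens, so a real budget should use your model's tokenizer.

```python
def build_context(
    constraints: str,
    summary: str,
    recent_turns: list[str],
    max_chars: int = 2000,
) -> str:
    """Always keep constraints and summary; add the newest turns that fit."""
    header = (
        f"Constraints:\n{constraints}\n\n"
        f"Summary of earlier conversation:\n{summary}\n"
    )
    budget = max_chars - len(header)
    kept: list[str] = []
    for turn in reversed(recent_turns):  # walk from newest to oldest
        cost = len(turn) + 1  # +1 for the joining newline
        if cost > budget:
            break
        kept.append(turn)
        budget -= cost
    # Restore chronological order before joining.
    return header + "\n".join(reversed(kept))
```

Because the constraints are restated at the top of every request, they cannot be pushed out by a long history. Only the oldest turns are dropped.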

🧪 6. Test Variations Systematically

Treat GPT-4 as you would any component under test:

Keep a library of prompt versions

A/B test temperature values and system prompts

Freeze test cases to track changes between model versions

Store both successes and failures

This helps catch regressions and keeps behavior more predictable across updates.
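A frozen set of test cases can be as simple as a list of prompts paired with checks. The sketch below is illustrative only: `PromptCase` and `run_cases` are hypothetical names, and `fake_model` stands in for a real model call so the example runs without network access.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PromptCase:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True when the response is acceptable


def run_cases(
    ask_model: Callable[[str], str], cases: list[PromptCase]
) -> dict[str, bool]:
    """Run every frozen case and record pass/fail so runs can be compared."""
    return {case.name: case.check(ask_model(case.prompt)) for case in cases}


def fake_model(prompt: str) -> str:
    # Stand-in for a real model call (assumption for this example).
    return '{"title": "Demo"}'


cases = [
    PromptCase("returns_json", "Output only JSON.", lambda r: r.strip().startswith("{")),
    PromptCase("under_500_chars", "Max 500 characters.", lambda r: len(r) <= 500),
]
results = run_cases(fake_model, cases)
print(results)
```

Saving the results of each run next to the prompt version and model version gives you something concrete to compare when behavior changes.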

⚠️ 7. Identify and Mitigate Hallucinations

When GPT-4 invents information confidently:

Require real citations (“link + source name + date”)

Ask the model to state its uncertainty when the answer is unknown

Set the model role to analyst rather than expert

Reduce temperature

Example safety prompt:

“If you are unsure, say ‘I don’t know’ rather than guessing.”
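If you require citations in a "link + source name + date" shape, a quick check can flag responses that skip parts of it. The sketch below is a rough heuristic with made-up names (`citation_problems`, `URL_RE`, `YEAR_RE`). It only checks the shape of a citation: a well-formed citation can still be invented, so links need to be verified separately.

```python
import re

URL_RE = re.compile(r"https?://\S+")
YEAR_RE = re.compile(r"\b(?:19|20)\d{2}\b")  # a four-digit year as a minimal date signal


def citation_problems(citation: str) -> list[str]:
    """Return which required parts appear to be missing from a citation string."""
    problems = []
    if not URL_RE.search(citation):
        problems.append("no link")
    if not YEAR_RE.search(citation):
        problems.append("no date")
    return problems


print(citation_problems("Some study says so"))
```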

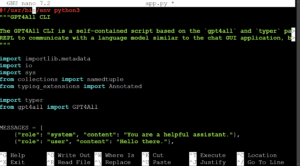

🧰 8. Use System Prompts for Core Behavior

System prompts act as the foundation of GPT-4 behavior.

Examples:

“You are a precise scientific assistant who never invents sources.”

“You always answer concisely with bullet points unless asked otherwise.”

Debug the base prompt first, then debug the output.
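Keeping the system prompt in one place makes it easier to debug on its own. The sketch below assumes the role/content message layout commonly used by chat-style APIs. `build_messages` is a hypothetical helper, and the exact request format depends on the client library you use.

```python
SYSTEM_PROMPT = "You are a precise scientific assistant who never invents sources."


def build_messages(
    user_prompt: str, system_prompt: str = SYSTEM_PROMPT
) -> list[dict[str, str]]:
    """Place the system prompt first so it frames every user request."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


messages = build_messages("Summarize the main causes of context overflow.")
print(messages)
```

With the system prompt held constant, any change in output between two runs can be traced to the user prompt, and the reverse holds too.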

Debugging GPT-4 is less about fixing code and more about refining communication. The most reliable results come from:

Clear structure

Explicit constraints

Controlled creativity

Iterative testing

Strong system prompts

As LLMs continue to evolve, prompt engineering and debugging are becoming essential skills for developers, researchers, and content creators.